Jan 17, 2023

Rate Limiting and Throttling

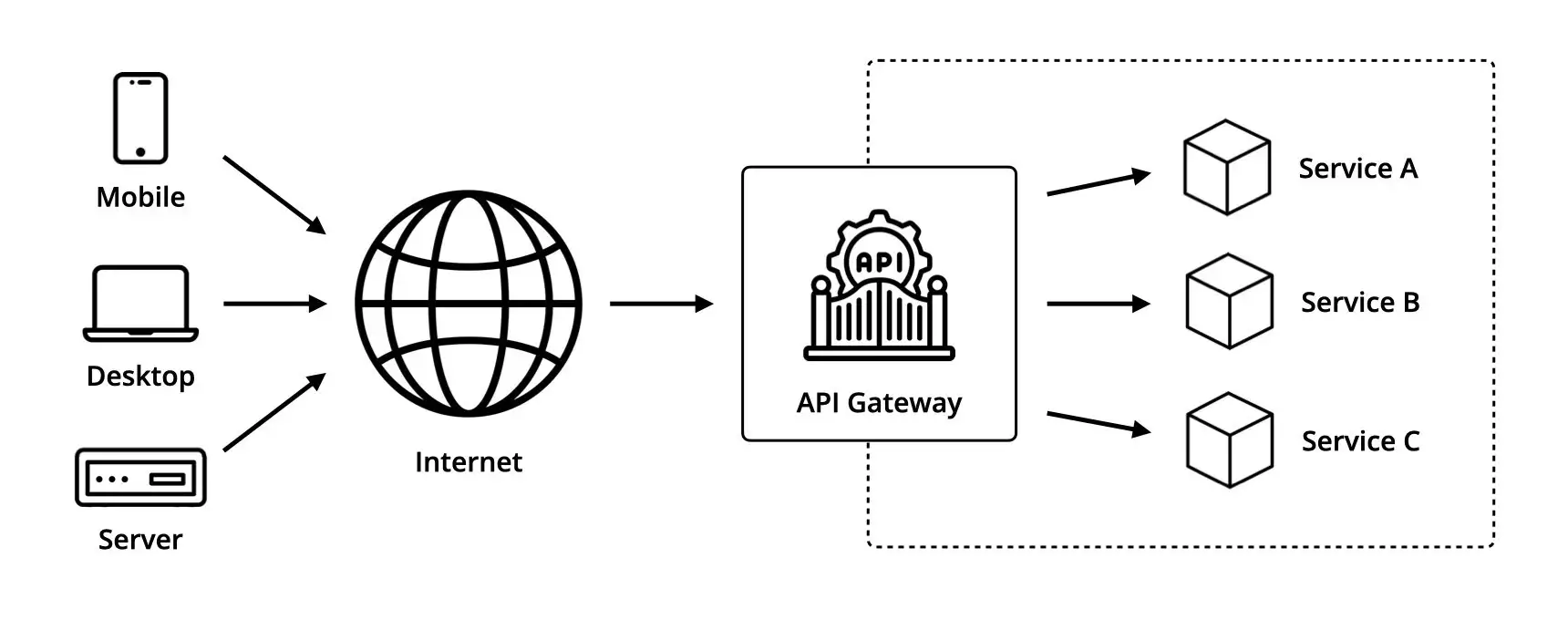

Rate Limiting and Throttling are strategies for limiting network traffic.

They are part of a wider range of policies named API management.

Although both are designed to limit API access, they serve different purposes.

Rate Limiting

Rate Limiting is a security measure meant to protect a service from malicious or excessive usage by limiting the number of requests a user or system can make to its API over a given period of time.

When the limit is reached, the API rejects every request that exceeds it with an HTTP 429 "Too Many Requests" status code.

These requests are usually tracked using either the IP address of the client or its API key.

Among other things, it helps protect a system against brute force attacks, denial of service attacks, or web scraping.

Here is an overview of the two most common Rate Limiting strategies.

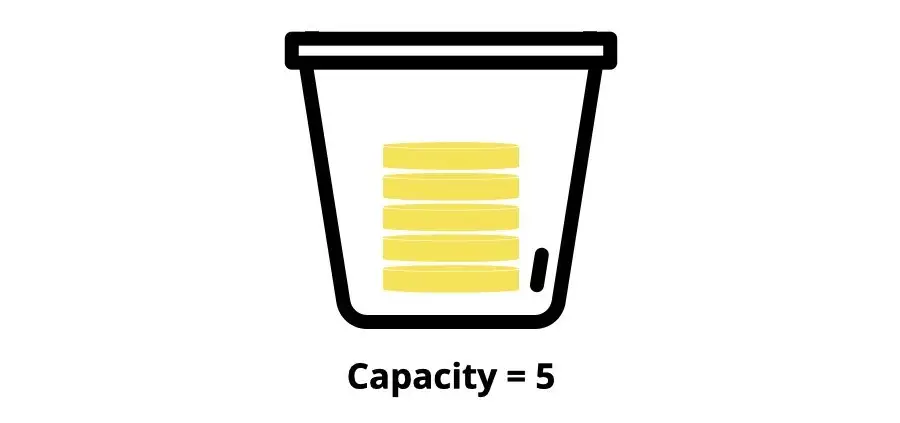

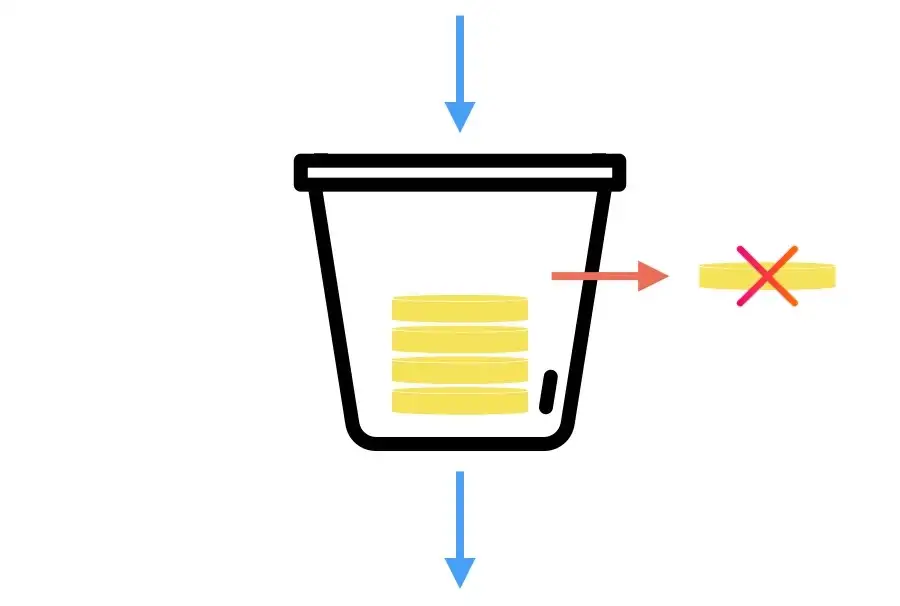

Token Bucket

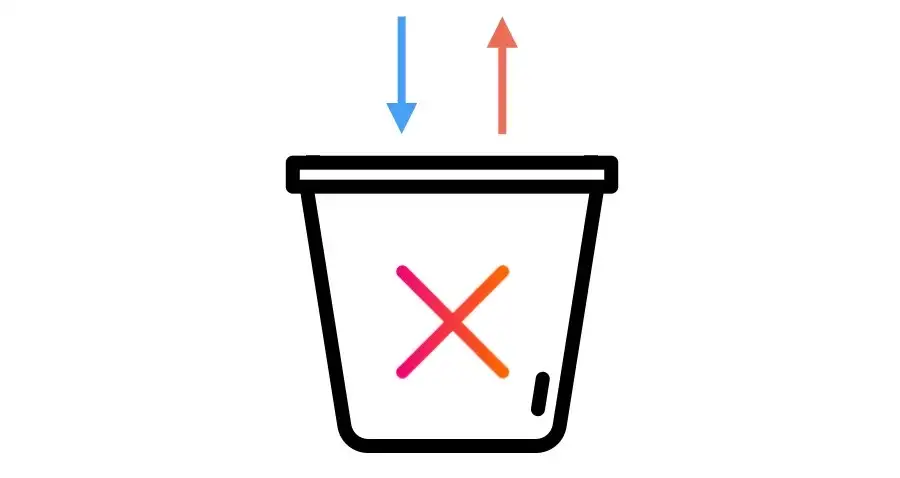

The token bucket is an algorithm that allows short bursts of requests, up to the capacity of the bucket, while capping the sustained request rate at the rate at which tokens are refilled.

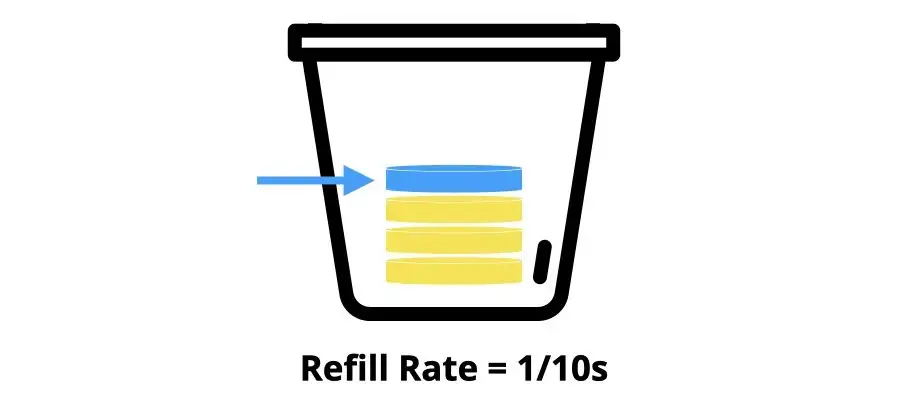

The bucket holds a certain number of tokens (e.g. 5).

When a request is processed, a token is removed from the bucket.

If there are no more tokens in the bucket, the request is automatically rejected.

At the same time, tokens are added to the bucket at a fixed rate (e.g. 1 every 10 seconds) while there is free space in it.
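The steps above can be sketched in a few lines of Python. This is only a minimal, single-threaded illustration, not a production implementation: the `TokenBucket` class, its `allow` method, and the injectable `clock` parameter are names made up for this example, and the defaults reuse the figures from the text (5 tokens, 1 new token every 10 seconds).

```python
import time


class TokenBucket:
    """Illustrative token bucket (hypothetical class, not a library API)."""

    def __init__(self, capacity=5, refill_interval=10.0, clock=time.monotonic):
        self.capacity = capacity                # maximum number of tokens
        self.refill_interval = refill_interval  # seconds per new token
        self.clock = clock                      # injectable so it can be tested
        self.tokens = capacity                  # the bucket starts full
        self.last_refill = clock()

    def _refill(self):
        # Add one token per full interval elapsed, never exceeding capacity.
        now = self.clock()
        new_tokens = int((now - self.last_refill) // self.refill_interval)
        if new_tokens > 0:
            self.tokens = min(self.capacity, self.tokens + new_tokens)
            self.last_refill += new_tokens * self.refill_interval

    def allow(self):
        """Return True and consume a token, or False if the bucket is empty."""
        self._refill()
        if self.tokens > 0:
            self.tokens -= 1
            return True
        return False
```

In a real API, a `False` result would typically translate into the HTTP 429 response described earlier, and one bucket would be kept per client (for example per IP address or API key).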

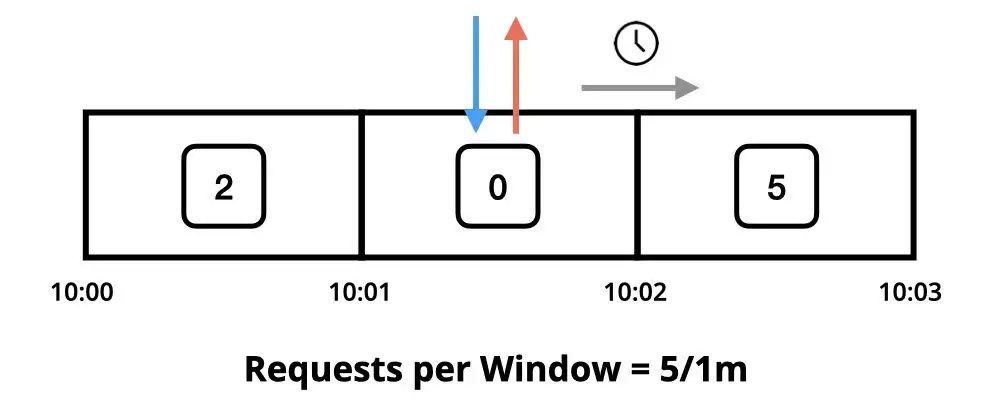

Fixed Window

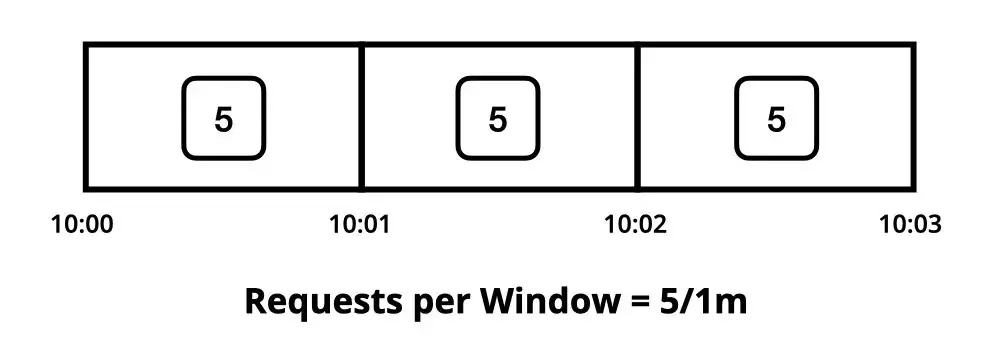

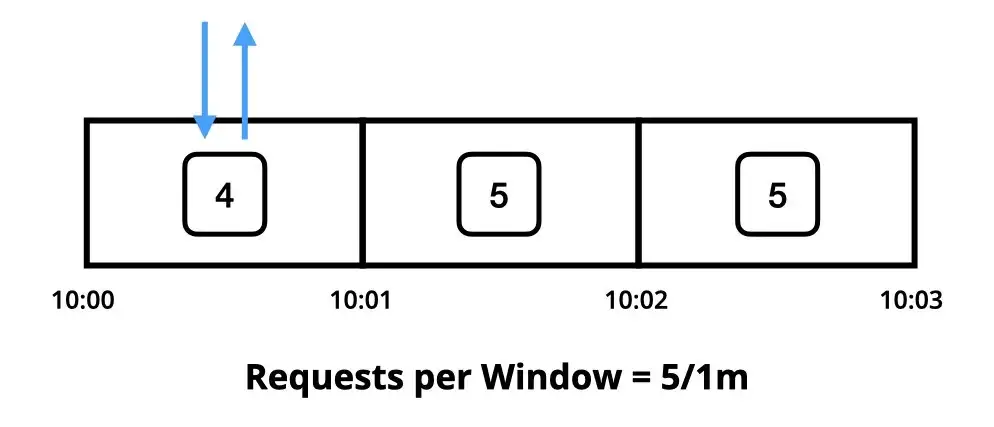

The fixed window is an algorithm that allows a maximum number of requests to be processed during a fixed time window.

The time window has an initial counter (e.g. 5 requests per minute).

When a request is processed within a specific time window, its counter is decremented by 1.

If the counter gets to 0, any further requests are blocked until the window resets.
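As a rough sketch, the fixed window counter described above could look like the following. The `FixedWindowLimiter` class and its parameters are hypothetical names chosen for this illustration, the defaults mirror the example of 5 requests per minute, and the code assumes a single thread and a single client.

```python
import time


class FixedWindowLimiter:
    """Illustrative fixed window counter (hypothetical class)."""

    def __init__(self, limit=5, window_seconds=60.0, clock=time.monotonic):
        self.limit = limit                    # requests allowed per window
        self.window_seconds = window_seconds  # length of one window
        self.clock = clock                    # injectable so it can be tested
        self.window_start = clock()
        self.remaining = limit                # counter for the current window

    def allow(self):
        """Return True if the request fits in the current window."""
        now = self.clock()
        elapsed = now - self.window_start
        if elapsed >= self.window_seconds:
            # A new window has started: realign its start and reset the counter.
            windows_passed = int(elapsed // self.window_seconds)
            self.window_start += windows_passed * self.window_seconds
            self.remaining = self.limit
        if self.remaining > 0:
            self.remaining -= 1
            return True
        return False
```

Because the counter resets all at once, a client can send a full quota at the very end of one window and another full quota at the start of the next.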

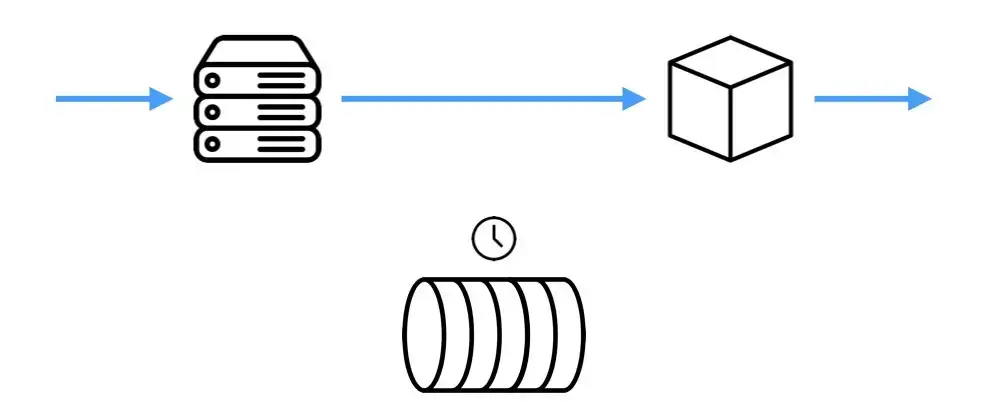

Throttling

Throttling, on the other hand, is a performance management technique meant to ensure the fair usage of a shared resource by controlling the amount of traffic the API can handle.

When the limit is reached, all requests that exceed it will be queued so that they can be processed in a subsequent window.

If a request cannot be processed after a certain number of attempts, it will be dropped from the queue and rejected by the API.
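The queueing behaviour can be simulated without any real timing. The sketch below is an assumption-laden illustration: the `Throttler` class, `submit`, and `run_window` are invented names, and it assumes that every window in which a request waits without being served counts as one failed attempt.

```python
from collections import deque


class Throttler:
    """Illustrative throttling queue (hypothetical class)."""

    def __init__(self, limit_per_window=2, max_attempts=3):
        self.limit_per_window = limit_per_window  # requests served per window
        self.max_attempts = max_attempts          # failed attempts before a drop
        self.queue = deque()                      # (request, failed_attempts)

    def submit(self, request):
        """Queue a request instead of rejecting it outright."""
        self.queue.append((request, 0))

    def run_window(self):
        """Serve one window; return (processed, rejected) requests."""
        processed, rejected = [], []
        waiting = deque()
        while self.queue:
            request, attempts = self.queue.popleft()
            if len(processed) < self.limit_per_window:
                processed.append(request)      # capacity left in this window
            elif attempts + 1 >= self.max_attempts:
                rejected.append(request)       # too many failed attempts: drop
            else:
                waiting.append((request, attempts + 1))  # try the next window
        self.queue = waiting
        return processed, rejected
```

For instance, with `limit_per_window=2` and `max_attempts=2`, seven queued requests are handled as two in the first window, two in the second, and the remaining three are rejected.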

Here is an overview of the three most common retry strategies.

Immediate retry

The application immediately retries the request once. In case of rejection, the application automatically switches to an alternative strategy such as regular intervals or exponential back-off.

Regular intervals

The application waits for the same period of time between each attempt. For example, 1 retry every 5 seconds.

Exponential back-off

The application waits a short time before the first retry, and then waits exponentially longer between each subsequent retry. For example, 1 retry after 1 second, then after 3 seconds, then after 9 seconds, and so on.
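To make the difference between these strategies concrete, here is a small client-side sketch. The helper names (`regular_delays`, `exponential_delays`, `call_with_retries`) are hypothetical, the defaults reuse the figures from the examples (5-second intervals, and 1, 3, 9 seconds), and catching every `Exception` is a simplification: a real client would retry only on specific errors such as an HTTP 429.

```python
import time


def regular_delays(interval=5.0, retries=3):
    """Same wait before every retry, e.g. 5s, 5s, 5s."""
    return [interval] * retries


def exponential_delays(base=1.0, factor=3.0, retries=3):
    """Wait grows by a constant factor, e.g. 1s, 3s, 9s."""
    return [base * factor ** attempt for attempt in range(retries)]


def call_with_retries(operation, delays, sleep=time.sleep):
    """Call operation once, then retry after each delay until it succeeds.

    A delay of 0 means an immediate retry. The last error is re-raised
    once all retries are exhausted.
    """
    last_error = None
    for delay in [0.0, *delays]:      # the first attempt happens right away
        if delay:
            sleep(delay)
        try:
            return operation()
        except Exception as error:    # simplification: retry on any error
            last_error = error
    raise last_error
```

An immediate retry followed by exponential back-off can then be expressed as `call_with_retries(operation, [0.0, *exponential_delays()])`.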